How is VXLAN implemented when we have more than one site (VXLAN EVPN Multisite)? For example, how is VXLAN designed and implemented in an enterprise data center that spans multiple sites? This is the question we want to discuss in this video.

We have three options for implementing VXLAN across multiple sites: Multi-Pod, Multi-Fabric, and a third method called Multisite.

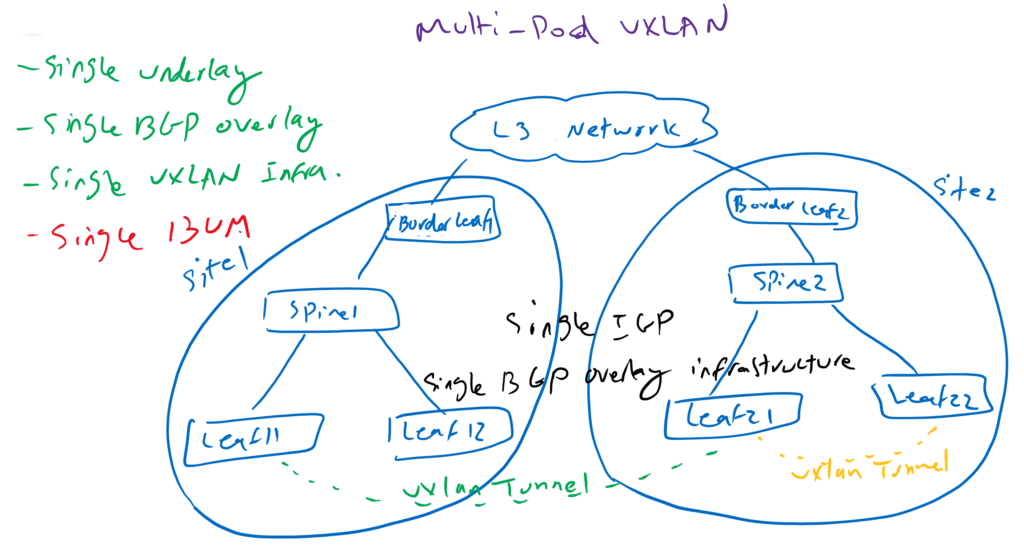

VXLAN Multi-Pod Solution

In Multi-Pod, although we have multiple sites, we have a single end-to-end IGP underlay, a single end-to-end BGP overlay, and single end-to-end VXLAN tunnels. In other words, all sites are treated as a single site.

As you can see in our topology, we have two sites connected by border leaf switches and an external L3 network, which can be any network, including a service provider's MPLS VPN network or our own WAN infrastructure. However, we have a single underlay routing domain spanning the two locations. At a minimum, the routes for the devices' loopback addresses must be advertised between the two sites so that we have end-to-end reachability. This can be achieved with the underlay BGP IPv4 address family across the WAN, which advertises loopback addresses between the sites, or the loopback addresses can be advertised via the provider's MPLS VPN infrastructure. Advertising loopback addresses between the two sites enables us to have a single BGP overlay across our entire network.

We also have a single VXLAN infrastructure throughout our network, and there is no difference between VXLAN tunnels within a site and VXLAN tunnels between sites. Here, there is no difference between the yellow tunnel and the green tunnel.

When we need end-to-end L2 connectivity between two sites, a single end-to-end tunnel is created between them, like the green tunnel shown here.

We also have a single domain for forwarding BUM (broadcast, unknown unicast, and multicast) traffic. In other words, BUM traffic is flooded across the entire network and is not confined to the local site, which is the main downside of this method.

This method is very easy to understand and implement.

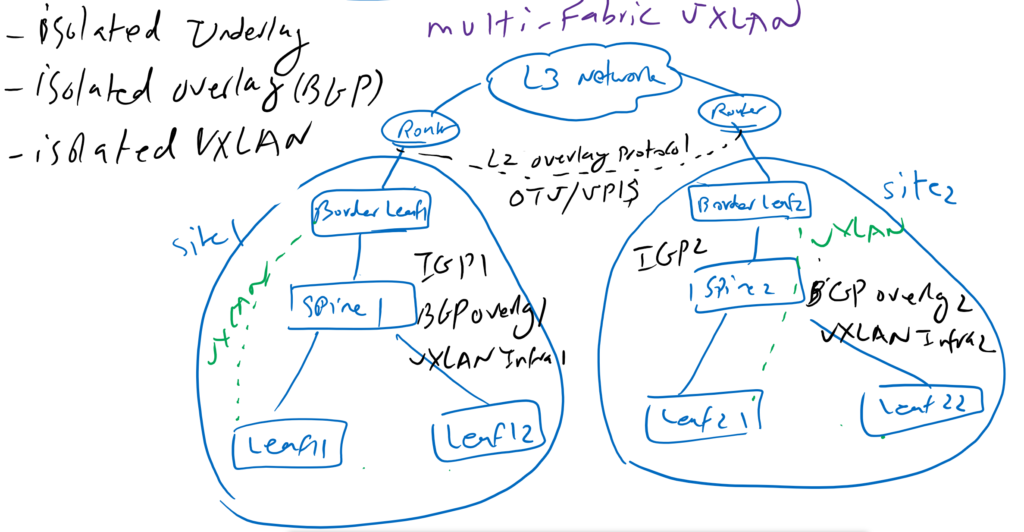

VXLAN Multi-Fabric Solution

In Multi-Fabric, unlike Multi-Pod, each site has its own completely isolated underlay, BGP overlay, VXLAN infrastructure, and BUM domain.

In our topology we have two completely isolated sites connected by border leaf switches and any L3 network. The IGP underlay, the BGP overlay, and the VXLAN infrastructure of each site are completely isolated from those of the other site. To have end-to-end L2 connectivity, we therefore need an L2 infrastructure between the sites, which is normally achieved by implementing OTV.

When we need end-to-end L2 connectivity between two sites, our traffic passes through two VXLAN tunnels and one L2 OTV infrastructure. The first VXLAN tunnel runs from the source leaf switch to the border leaf switch at the source site. The traffic then crosses the L2 OTV tunnel to reach the border leaf switch at the destination site. Finally, a second VXLAN tunnel carries it from the border leaf switch at the destination site to the leaf switch to which the final destination is connected.

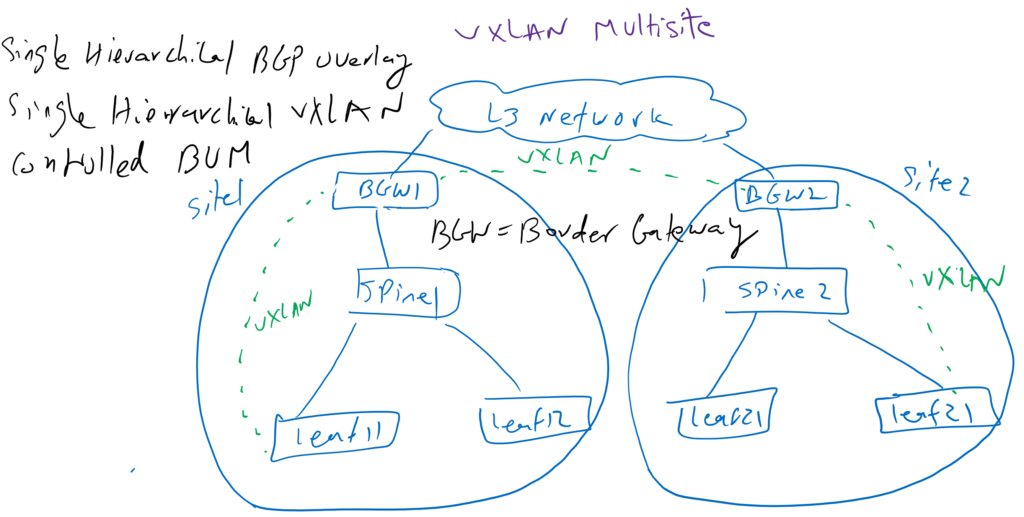

VXLAN Multisite Solution

The third solution is called Multisite, which has a uniform, hierarchical VXLAN infrastructure. This means that, for end-to-end L2 connectivity, the data traffic passes through three VXLAN tunnels. The first tunnel runs from the leaf switch connected to the source endpoint to the border leaf switch at the source site. The second runs from the border leaf switch at the source site to the border leaf switch at the destination site. The third and final tunnel runs from the border leaf switch at the destination site to the leaf switch to which the final destination is connected.
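To compare the three designs side by side, the forwarding paths described so far can be written down as a small Python sketch. This is only an illustration of the segments an inter-site L2 frame crosses; the device names (leaf-a1, border-a, and so on) are hypothetical and do not come from the topology in the figures.

```python
from typing import List, Tuple

# Hypothetical device names, used only for illustration.
SRC_LEAF, SRC_BORDER = "leaf-a1", "border-a"
DST_BORDER, DST_LEAF = "border-b", "leaf-b1"

# One segment of the path: (encapsulation, tunnel start, tunnel end)
Segment = Tuple[str, str, str]


def l2_path(design: str) -> List[Segment]:
    """Return the encapsulation segments an inter-site L2 frame crosses."""
    if design == "multi-pod":
        # A single end-to-end VXLAN tunnel from leaf to leaf.
        return [("vxlan", SRC_LEAF, DST_LEAF)]
    if design == "multi-fabric":
        # VXLAN inside each site, OTV between the border leaf switches.
        return [
            ("vxlan", SRC_LEAF, SRC_BORDER),
            ("otv", SRC_BORDER, DST_BORDER),
            ("vxlan", DST_BORDER, DST_LEAF),
        ]
    if design == "multisite":
        # Three VXLAN tunnels, stitched together at the border switches.
        return [
            ("vxlan", SRC_LEAF, SRC_BORDER),
            ("vxlan", SRC_BORDER, DST_BORDER),
            ("vxlan", DST_BORDER, DST_LEAF),
        ]
    raise ValueError(f"unknown design: {design}")


if __name__ == "__main__":
    for design in ("multi-pod", "multi-fabric", "multisite"):
        hops = " | ".join(f"{encap}: {a} -> {b}" for encap, a, b in l2_path(design))
        print(f"{design:12} {hops}")
```

The point of the comparison is that Multisite keeps the three-segment structure of Multi-Fabric but uses VXLAN for every segment, including the one between sites.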

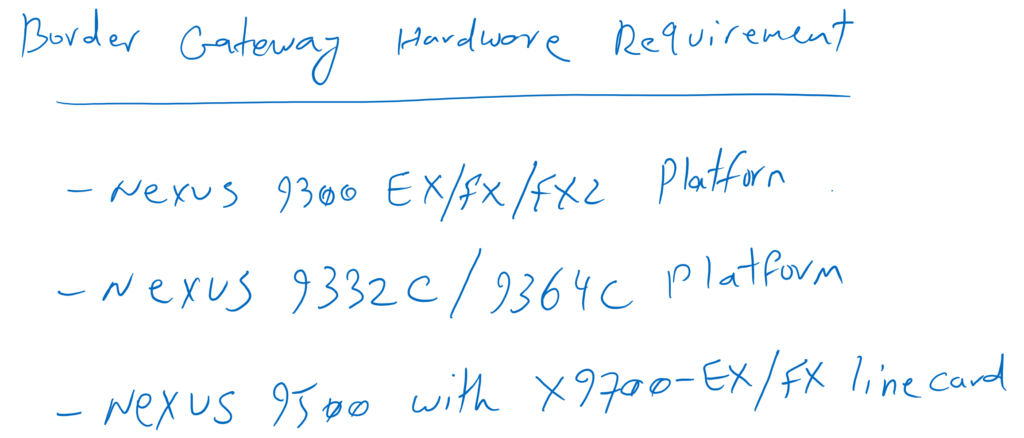

Border Gateway Hardware Requirement

To implement this solution, the border leaf switches need specific capabilities that set them apart from other leaf switches. Leaf switches with hierarchical VXLAN tunneling capability are known as border gateways. The border gateway feature is supported on the Cisco Nexus 9300 EX/FX/FX2, Nexus 9332C, and Nexus 9364C. The border gateway function can also be implemented on spine switches based on the Nexus 9500 platform with X9700-EX/FX line cards.

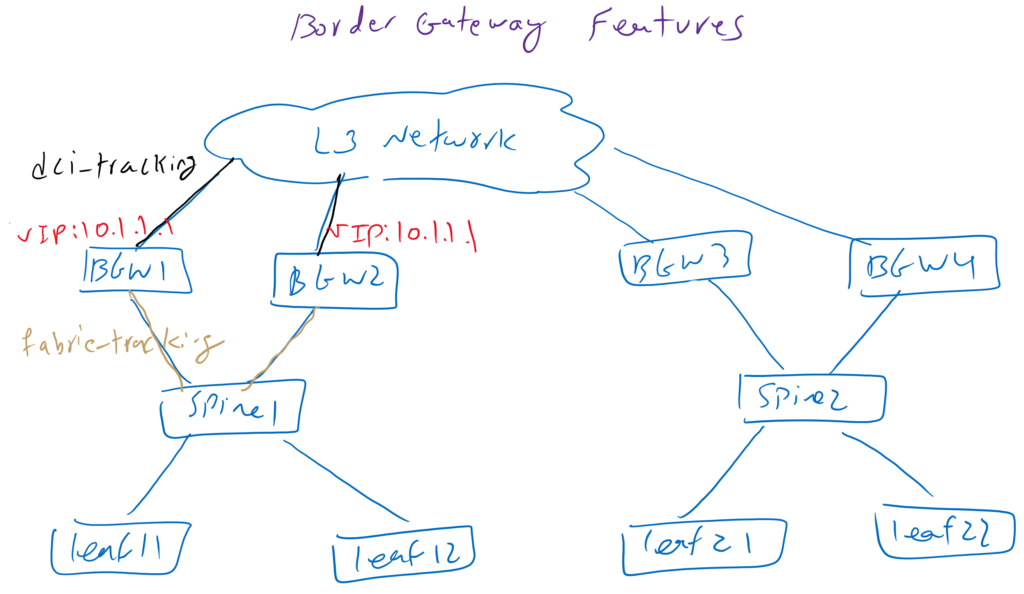

Border Gateway Redundancy

Since border gateways are a critical part of the VXLAN Multisite solution, two border gateways are normally deployed at each site. The two border gateways at a site share one virtual IP, which is used as the source of the VXLAN tunnel between sites.

With multisite fabric tracking and DCI (data center interconnect) tracking on every border gateway, we monitor the availability of its connections to the fabric and to the DCI. With these two features, if a border gateway loses its connection to the fabric or to the DCI, the virtual IP on that border gateway is automatically shut down so that the VXLAN tunnel traffic is forwarded to the other border gateway.
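The tracking behavior can be sketched as a simple rule in Python. This is a simplified model, not device code: we assume that a border gateway keeps the shared virtual IP up only while it still has at least one fabric-facing link and at least one DCI-facing link up. The class, the interface names, and the gateway names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class BorderGateway:
    name: str
    # Tracked links, keyed by (hypothetical) interface name; True means up.
    fabric_links: Dict[str, bool] = field(default_factory=dict)
    dci_links: Dict[str, bool] = field(default_factory=dict)

    def virtual_ip_up(self) -> bool:
        """Assumed rule: the virtual IP stays up only while at least one
        fabric-facing link and at least one DCI-facing link are up."""
        return any(self.fabric_links.values()) and any(self.dci_links.values())


def active_gateways(gateways: List[BorderGateway]) -> List[str]:
    """Names of the gateways that still advertise the shared virtual IP."""
    return [gw.name for gw in gateways if gw.virtual_ip_up()]


if __name__ == "__main__":
    bgw1 = BorderGateway("bgw-1", {"Eth1/1": True}, {"Eth1/49": True})
    bgw2 = BorderGateway("bgw-2", {"Eth1/1": True}, {"Eth1/49": True})
    print(active_gateways([bgw1, bgw2]))  # both gateways carry inter-site traffic

    # bgw-1 loses its only DCI link, so its virtual IP goes down
    bgw1.dci_links["Eth1/49"] = False
    print(active_gateways([bgw1, bgw2]))  # only bgw-2 remains
```

Because both gateways share the same virtual IP, remote sites do not need to learn a new tunnel endpoint when one gateway withdraws; traffic simply arrives at the gateway that still advertises the address.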

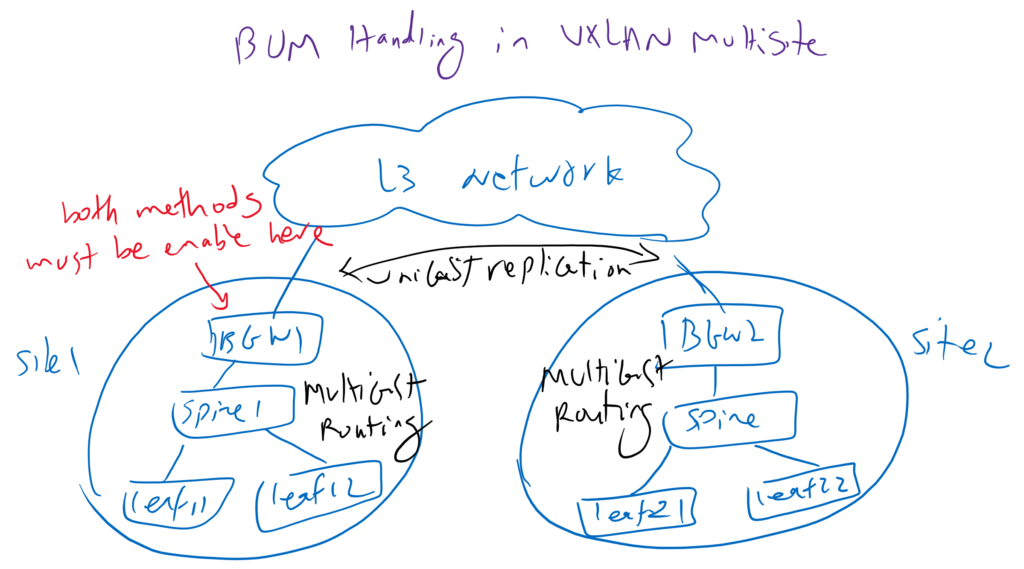

BUM Traffic in VXLAN Multisite Solution

For forwarding BUM traffic in a multisite environment, we have the same two options as in a single site: multicast routing and unicast replication. However, since the data center interconnect is usually not under our control, we typically cannot implement multicast routing across it. Instead, we can use multicast routing to forward BUM traffic within each site and unicast replication to forward BUM traffic between sites. So on border gateway switches, both multicast routing and unicast replication must be enabled for each L2 VNI in the interface nve configuration context.
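The split between the two methods can be illustrated with a short Python sketch of what a border gateway does with a BUM frame. It is a simplified model under stated assumptions: the VNI number, the multicast group, the site names, and the remote virtual IPs are all hypothetical, and a frame arriving from the DCI is only forwarded into the local site.

```python
from typing import Dict, List, Tuple

# Hypothetical values, used only for illustration.
LOCAL_MCAST_GROUP: Dict[int, str] = {30010: "239.1.1.10"}  # L2 VNI -> group in the local site
REMOTE_SITE_VIPS: Dict[str, str] = {                       # remote site -> border gateway virtual IP
    "site-b": "10.200.0.2",
    "site-c": "10.200.0.3",
}


def replicate_bum(vni: int, arrived_from: str) -> List[Tuple[str, str]]:
    """Return the (method, outer destination IP) copies a border gateway sends."""
    if arrived_from == "fabric":
        # Frame came from the local site: send one unicast copy to each remote site.
        return [("unicast", vip) for vip in REMOTE_SITE_VIPS.values()]
    if arrived_from == "dci":
        # Frame came from a remote site: send one copy to the local multicast group.
        return [("multicast", LOCAL_MCAST_GROUP[vni])]
    raise ValueError(f"unknown direction: {arrived_from}")


if __name__ == "__main__":
    print(replicate_bum(30010, "fabric"))
    print(replicate_bum(30010, "dci"))
```

With unicast replication the number of copies sent across the DCI grows with the number of remote sites, while inside a site a single multicast copy is enough regardless of how many leaf switches are listening.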

VXLAN BUM Handling with Multicast Routing

VXLAN BUM Handling with Unicast Replication

With the concepts discussed in this section in place, we will learn how to implement a VXLAN Multisite solution in the next video.